Let’s be honest—if you’ve attended any technology conference or scrolled through LinkedIn in the past year, you’d think AI is the only thing happening in IT. While artificial intelligence is undeniably transformative, there’s a massive architectural renaissance happening beneath the surface that’s getting far less attention than it deserves.

The physical and logical infrastructure required to support the modern digital economy is undergoing fundamental changes. We’re confronting the thermodynamic limits of silicon, the boundaries of traditional memory architectures, and navigating increasingly complex geopolitical frameworks that dictate where data can live and who can access it.

In this deep dive, we’ll explore the infrastructure trends that will shape enterprise IT from 2026 through 2030—trends that strategic leaders can’t afford to ignore while everyone’s distracted by the AI conversation.

The Memory Wall and the Rise of Disaggregated Compute

Here’s a problem that doesn’t get enough airtime: we’ve hit the memory wall. Modern enterprise workloads are increasingly constrained not by processing power, but by the speed at which data can be fed to processor cores. Traditional architectures that bolt memory directly to the motherboard simply can’t scale to meet demand.

Enter Compute Express Link (CXL), particularly the new 4.0 specification released in late 2025. CXL fundamentally decouples memory from the physical motherboard, enabling cache-coherent connectivity between processors, accelerators, and massive external memory pools.

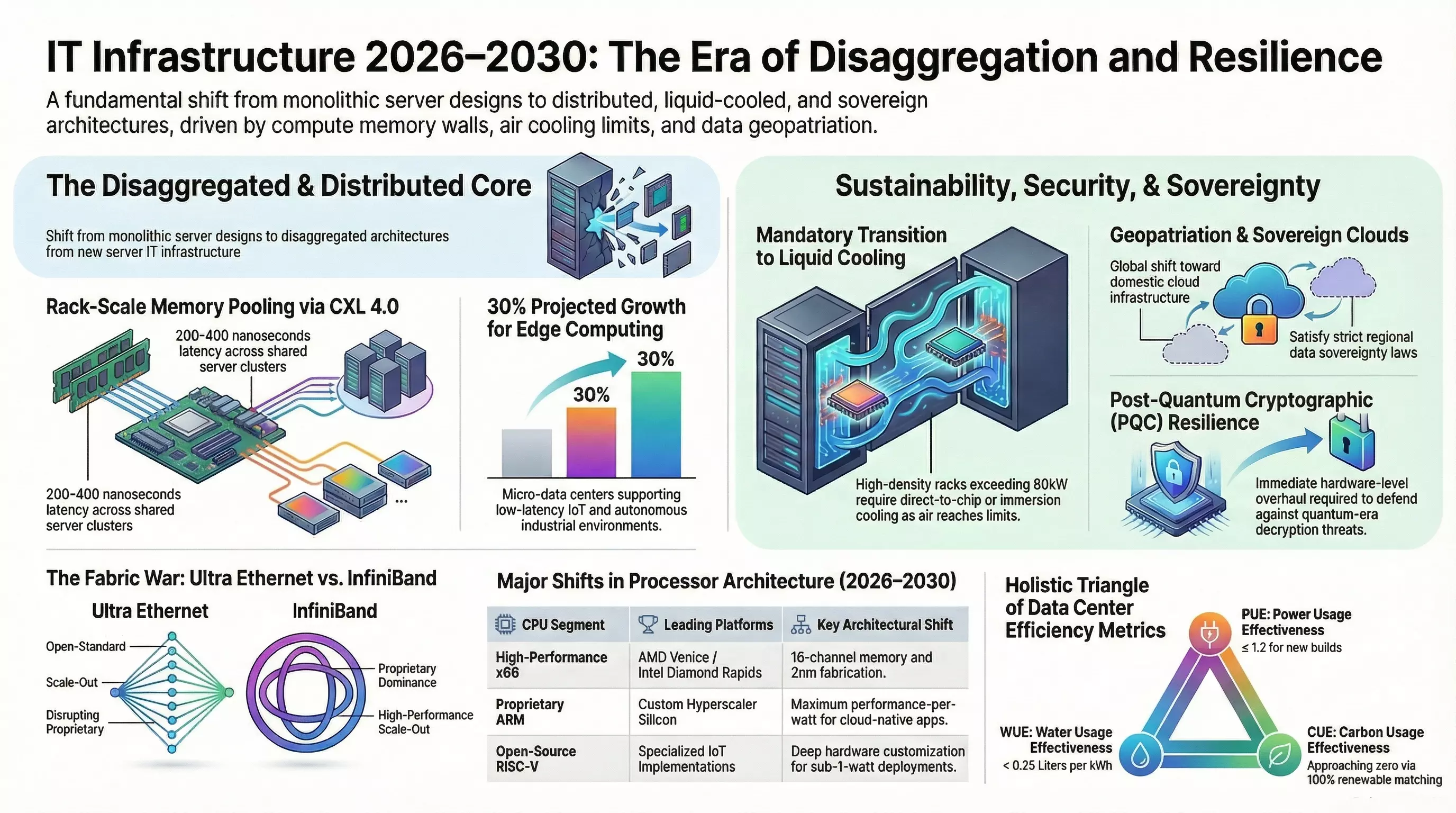

The implications are staggering. Historically, deploying large-scale in-memory databases or analytics engines required distributing data across dozens of servers because no single machine could hold enough RAM. This forced data to traverse networks, introducing latency penalties of 1 to 500 microseconds depending on the protocol. With CXL 4.0, server clusters can access centralized rack-scale memory pools exceeding 100 terabytes—with access latencies dropping to 200-400 nanoseconds.

Yes, that’s still slightly slower than directly attached DRAM, but it completely eliminates the microsecond-level network bottlenecks that have historically crippled scale-out applications.
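To put those numbers side by side, here is a quick back-of-the-envelope sketch in Python. It uses only the latency ranges quoted above; the names are illustrative, and real figures will depend on the fabric, the protocol, and the workload.

```python
# Latency ranges quoted in the text, expressed in nanoseconds.
NETWORK_LATENCY_NS = (1_000, 500_000)   # 1 to 500 microseconds
CXL_POOL_LATENCY_NS = (200, 400)        # 200 to 400 nanoseconds


def speedup_range(slow, fast):
    """Return (smallest, largest) ratio of slow-path to fast-path latency."""
    smallest = slow[0] / fast[1]   # fastest network hop vs slowest CXL access
    largest = slow[1] / fast[0]    # slowest network hop vs fastest CXL access
    return smallest, largest


smallest, largest = speedup_range(NETWORK_LATENCY_NS, CXL_POOL_LATENCY_NS)
print(f"Per access, the CXL pool is roughly {smallest:.1f}x to {largest:,.0f}x faster")
```

Even at the pessimistic end of the range the pooled memory wins, and at the optimistic end the gap is more than three orders of magnitude.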

A word of caution: the software ecosystem hasn’t fully caught up. While hypervisors like vSphere 8.0 U2 have added experimental CXL pooling capabilities, they remain uncertified for mission-critical production environments. Expect to invest significant effort in compatibility testing and orchestration tooling as this space matures.

On the processor front, we’re seeing a fascinating three-way race between x86, ARM, and RISC-V. Among the x86 vendors, AMD’s EPYC Venice is pushing toward 256 cores per socket on 2nm fabrication, while Intel has pivoted entirely to 16-channel memory architectures for Diamond Rapids after canceling its 8-channel variants, a direct acknowledgment of the insatiable memory bandwidth demands of modern workloads.

But the real story might be the diversification of instruction set architectures. ARM has successfully transitioned from mobile dominance into the enterprise data center, with hyperscalers developing proprietary ARM processors optimized for specific workloads. Meanwhile, RISC-V is emerging as an open, royalty-free ISA that promises unprecedented customization for edge and IoT deployments, with some implementations consuming less than a single watt where comparable ARM designs draw about four.

Storage Fabrics and the Death of Direct-Attached Everything

The storage world is experiencing a parallel transformation. The global datasphere is projected to grow from 173 zettabytes in 2024 to over 527 zettabytes by 2029. Traditional direct-attached storage creates severe stranded capacity issues where isolated servers exhaust local limits while neighboring nodes sit idle with unutilized drives.
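It helps to turn that projection into an annual rate. The sketch below applies the standard compound annual growth rate formula to the two figures quoted above; the function name is just illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: 173 ZB in 2024 growing to 527 ZB by 2029.
rate = cagr(173, 527, 2029 - 2024)
print(f"Implied growth: {rate:.1%} per year")
```

That works out to roughly 25% a year, compounding, which is why capacity that looks generous at purchase time gets stranded or exhausted so quickly.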

NVMe over Fabrics (NVMe-oF) has moved from high-performance computing luxury to a standard architectural requirement. By extending the NVMe protocol across high-speed networks, compute nodes can access remote storage pools with latencies that come close to those of local drives.

The choice of transport layer involves real trade-offs. RDMA over Converged Ethernet (RoCE) offers the lowest latency but requires meticulous network tuning, with Priority Flow Control configured to create a lossless environment. NVMe over TCP runs on standard Ethernet infrastructure: slightly higher latency and CPU overhead, but drastically reduced deployment complexity. For many organizations, that simplicity wins.

Perhaps more interesting is the emergence of computational storage drives that embed processing directly onto the storage device itself. Samsung’s SmartSSD integrates AMD FPGAs alongside NAND flash, enabling database filtering, encryption, and transcoding to occur where the data lives. You’re not moving terabytes across networks anymore—you’re returning only the refined results you actually need.
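The data-movement argument is easy to see in a toy model. The sketch below is not a real computational storage API; the dataset, the filter, and both function names are hypothetical, and the "drive" is just a Python list. It only counts how many bytes would cross the bus in each case.

```python
import json

# Hypothetical dataset: 10,000 small JSON records "stored on the drive".
# The records are ASCII, so len() of each string equals its size in bytes.
records = [
    json.dumps({"id": i, "region": "eu" if i % 10 == 0 else "us", "value": i})
    for i in range(10_000)
]


def host_side_filter(stored):
    """Conventional path: ship every record to the host, then filter there."""
    bytes_moved = sum(len(r) for r in stored)
    hits = [r for r in stored if json.loads(r)["region"] == "eu"]
    return hits, bytes_moved


def device_side_filter(stored):
    """Pushdown path: the drive filters, and only matches cross the bus."""
    hits = [r for r in stored if json.loads(r)["region"] == "eu"]
    bytes_moved = sum(len(r) for r in hits)
    return hits, bytes_moved


host_hits, host_bytes = host_side_filter(records)
dev_hits, dev_bytes = device_side_filter(records)
print(f"host filter moved {host_bytes:,} bytes; pushdown moved {dev_bytes:,} bytes")
```

Both paths return the same records. The only difference is where the filtering happens, and with a 10% match rate the pushdown path moves about a tenth of the data.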

The Mandatory Transition to Liquid Cooling

Traditional data center designs were engineered for 5-10 kilowatt racks. Today’s compute nodes featuring high-core-count CPUs and next-generation accelerators are pushing rack densities into the 10-30 kW range routinely, with high-performance clusters exceeding 80 kW and approaching 100 kW per rack.

At these power levels, air cooling hits absolute thermodynamic limits. Forced air simply cannot remove heat efficiently enough when individual processors consume over 1,000 watts. The industry is undergoing a mandatory transition to liquid cooling—projections indicate 40-50% of data centers will integrate some form of liquid cooling technology within the next few years.

Two primary approaches are emerging. Direct-to-chip (DTC) cooling circulates engineered coolant through cold plates mounted against processor surfaces—liquid is approximately 1,000 times more effective at absorbing and transporting heat than air. Immersion cooling takes this further, fully submerging entire server chassis in non-conductive dielectric fluid baths. While immersion offers unparalleled thermal stability and the highest possible rack densities, the weight of the fluid and specialized structural requirements present significant operational challenges.
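A rough calculation shows why air runs out of headroom. The sketch below uses the basic heat transport relation (heat = mass flow × specific heat × temperature rise) to estimate the coolant flow a rack needs. Plain water stands in for an engineered coolant, the fluid properties are approximate room-temperature values, and the 15 K temperature rise is an assumption chosen for illustration.

```python
# Approximate fluid properties near room temperature.
AIR = {"density": 1.2, "cp": 1005.0}       # kg/m^3, J/(kg*K)
WATER = {"density": 997.0, "cp": 4180.0}   # kg/m^3, J/(kg*K)


def flow_m3_per_s(heat_w, fluid, delta_t_k):
    """Volumetric flow needed to carry heat_w watts at a delta_t_k temperature rise."""
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


for rack_kw in (10, 30, 100):
    air = flow_m3_per_s(rack_kw * 1000, AIR, delta_t_k=15)
    water = flow_m3_per_s(rack_kw * 1000, WATER, delta_t_k=15)
    print(f"{rack_kw:>3} kW rack: air {air:.2f} m^3/s, water {water * 1000:.2f} L/s")
```

Under these assumptions, a 100 kW rack needs several cubic meters of air every second but only a liter or two of water. The exact multiple depends on the coolant and on which property you compare, but the order-of-magnitude gap is what makes liquid unavoidable at these densities.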

Edge Computing Comes of Age

The centralization of data in hyperscale facilities fundamentally conflicts with low-latency requirements for industrial applications, autonomous logistics, and remote healthcare. The micro data center market, valued at $6.18 billion in 2024, is projected to grow at nearly 19% annually as organizations push compute closer to data sources.
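Part of that latency conflict is plain physics. Light in optical fiber travels at roughly two-thirds of its vacuum speed, so distance alone sets a floor on round-trip time. The sketch below estimates that floor; the refractive index of 1.47 is a typical value for silica fiber and is an assumption, and real paths add routing, queuing, and processing delay on top.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47  # typical for silica fiber; an assumption


def fiber_rtt_ms(distance_km):
    """Best-case round-trip time over fiber, ignoring routing and queuing."""
    speed_in_fiber = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX
    return 2 * distance_km / speed_in_fiber * 1000


for km in (10, 100, 1000, 3000):
    print(f"{km:>5} km: {fiber_rtt_ms(km):.2f} ms round trip")
```

A control loop that needs a response within a few milliseconds simply cannot be served from a region a thousand kilometers away, no matter how fast the servers are.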

These aren’t your grandfather’s server closets. Modern micro data centers offer the full capabilities of traditional multi-megawatt facilities—integrated power, liquid cooling, fire suppression, physical security—condensed into modular 20-40 rack unit form factors. Prefabricated and factory-integrated, they compress construction schedules dramatically compared to the 18-24 month delays plaguing traditional data center construction due to power grid constraints and supply chain bottlenecks.

Europe alone had over 1,836 active edge nodes by 2024, concentrated in Germany, France, and the Netherlands to support localized healthcare and public-sector workloads. This proliferation is accelerating as organizations discover they can bypass the high capital expenditures and unpredictable egress costs of shipping massive IoT datasets back to centralized clouds.

The Network Fabric Wars: Open Standards vs. Proprietary Performance

Within the data center core, a fierce architectural battle is unfolding between established InfiniBand protocols and the newly standardized Ultra Ethernet architecture.

InfiniBand has been the undisputed leader in high-performance computing interconnects, achieving sub-two-microsecond latencies with guaranteed zero packet loss through hardware-level credit-based flow control. But in practice it’s a heavily vendor-controlled ecosystem, which is exactly the kind of lock-in many enterprises want to avoid.
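Credit-based flow control is simpler than it sounds. The toy model below is not InfiniBand’s actual link protocol; the class, its methods, and the buffer size are all hypothetical. It only shows the core idea: a sender may transmit only while it holds credits, and the receiver hands credits back as it frees buffer slots, so the buffer can never overflow.

```python
from collections import deque


class CreditLink:
    """Toy link where the sender needs one credit per packet."""

    def __init__(self, receiver_buffer_slots):
        self.capacity = receiver_buffer_slots
        self.credits = receiver_buffer_slots   # credits advertised by the receiver
        self.buffer = deque()
        self.dropped = 0

    def try_send(self, packet):
        """Send only if a credit is available; otherwise the sender must wait."""
        if self.credits == 0:
            return False
        self.credits -= 1
        if len(self.buffer) >= self.capacity:
            self.dropped += 1          # never happens while credits track free slots
        else:
            self.buffer.append(packet)
        return True

    def receiver_drain(self, n):
        """Receiver consumes up to n packets and returns one credit for each."""
        drained = min(n, len(self.buffer))
        for _ in range(drained):
            self.buffer.popleft()
        self.credits += drained
        return drained


link = CreditLink(receiver_buffer_slots=4)
pending = deque(range(20))
delivered = 0
stalls = 0
while pending or link.buffer:
    while pending:
        if link.try_send(pending[0]):
            pending.popleft()
        else:
            stalls += 1                # back-pressure: the packet waits, it is not dropped
            break
    delivered += link.receiver_drain(2)

print(f"delivered={delivered} dropped={link.dropped} sender_stalls={stalls}")
```

The sender is deliberately faster than the receiver here, yet nothing is lost. Congestion shows up as waiting at the source rather than as dropped packets and retransmissions.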

The Ultra Ethernet Consortium launched the UEC 1.0 Specification in mid-2025 as a ground-up reconstruction of the Ethernet stack to achieve InfiniBand-like performance while retaining Ethernet’s vast open ecosystem and multi-vendor interoperability. Traditional Ethernet protocols struggle with incast congestion and rely on reactive packet drop mechanisms that destroy performance in highly synchronized parallel computing. UEC introduces modern RDMA over IP with proactive congestion management, plus sophisticated multi-pathing techniques like deep packet spraying across all available links.
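The difference between traditional per-flow load balancing and packet spraying can be sketched in a few lines. This is a simplified illustration and not the UEC mechanism itself: the flow names and packet counts are made up, CRC32 stands in for a switch’s hash function, and round-robin stands in for whatever spraying policy the hardware uses.

```python
import zlib
from collections import Counter

LINKS = 4
# Hypothetical workload: four large flows of 1,000 packets each.
flows = {f"10.0.0.{i}->10.0.1.{i}": 1000 for i in range(1, 5)}


def per_flow_hashing(flows, links):
    """Classic ECMP: every packet of a flow follows the same hashed link."""
    load = Counter({link: 0 for link in range(links)})
    for flow_id, packets in flows.items():
        load[zlib.crc32(flow_id.encode()) % links] += packets
    return load


def packet_spraying(flows, links):
    """Spraying: packets are spread across all links regardless of flow."""
    load = Counter({link: 0 for link in range(links)})
    next_link = 0
    for packets in flows.values():
        for _ in range(packets):
            load[next_link] += 1
            next_link = (next_link + 1) % links
    return load


hashed = per_flow_hashing(flows, LINKS)
sprayed = packet_spraying(flows, LINKS)
print("per-flow hashing:", dict(hashed))
print("packet spraying: ", dict(sprayed))
```

With hashing, two large flows can land on the same link while another link sits empty. Spraying keeps every link evenly loaded, at the cost of packets arriving out of order, which the receiving end has to tolerate.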

At the wireless edge, Wi-Fi 7 is seeing mainstream adoption with nearly 59% of IT organizations initiating upgrades in 2026. The technology delivers massive throughput through pristine 6 GHz spectrum and ultra-wide 320 MHz channels. Meanwhile, 5G-Advanced implementations are introducing native support for high-precision indoor positioning and Time-Sensitive Networking—transforming cellular networks into intelligent spatial sensors capable of tracking industrial assets with sub-meter accuracy inside concrete structures.

And on the horizon? Global standard-setting bodies are laying groundwork for 6G technologies expected around 2030, exploring sub-terahertz frequencies from 100 GHz to 3 THz that offer theoretical terabit-per-second bandwidths—though with extreme propagation challenges that will require innovative solutions like Reconfigurable Intelligent Surfaces to overcome.
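The propagation problem falls straight out of the free-space path loss formula, which grows with the square of frequency. The sketch below compares a mid-band 5G frequency with two sub-terahertz ones over the same 100 meter link. The distance and the chosen frequencies are illustrative, and the formula ignores atmospheric absorption and obstacles, which make things worse at these bands.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458


def free_space_path_loss_db(distance_m, frequency_hz):
    """Free-space path loss in dB between isotropic antennas."""
    return 20 * math.log10(4 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_S)


for label, freq in (("3.5 GHz", 3.5e9), ("100 GHz", 100e9), ("300 GHz", 300e9)):
    print(f"{label:>8} at 100 m: {free_space_path_loss_db(100, freq):.1f} dB")
```

Moving from 3.5 GHz to 300 GHz costs nearly 39 dB over the same distance, which is a loss factor of several thousand before a single wall gets in the way.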

Security Beyond Software: Hardware Roots of Trust

As infrastructure becomes more disaggregated and decentralized, traditional software-based security perimeters have dissolved entirely. Enterprise security architecture in 2026 is characterized by hardware-level enforcement and profound cryptographic agility.

The rapid maturation of quantum computing represents an existential threat to modern asymmetric encryption. Cryptographically relevant quantum computers will eventually break current public-key infrastructure using algorithms like Shor’s. Threat actors are already employing “harvest now, decrypt later” tactics—stealing encrypted data today with the intention of unlocking it once quantum capabilities mature.
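One common way to reason about this exposure is Mosca’s inequality: data is at risk if the time it must stay secret plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists. The sketch below encodes that rule. Every number in it is a hypothetical planning assumption, not a forecast.

```python
def exposed_to_harvest_now_decrypt_later(shelf_life_years, migration_years, years_to_crqc):
    """Mosca's inequality: at risk when shelf life + migration time > time to a CRQC."""
    return shelf_life_years + migration_years > years_to_crqc


# Hypothetical datasets and how long each must remain confidential, in years.
datasets = {
    "marketing analytics": 2,
    "customer PII": 10,
    "long-term health records": 25,
}

at_risk = {
    name: exposed_to_harvest_now_decrypt_later(shelf_life, migration_years=5, years_to_crqc=12)
    for name, shelf_life in datasets.items()
}
print(at_risk)
```

The useful part is not the specific answer but the shape of the question: long-lived data can already be exposed today even if the quantum computer is more than a decade away.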

NIST finalized the first suite of post-quantum cryptography algorithms in 2024, and implementation is now a hardware challenge. These PQC algorithms require significantly larger key sizes and more intensive mathematical processing. Semiconductor manufacturers are deploying FPGAs with built-in CNSA 2.0-compliant post-quantum support and crypto-agility, allowing network infrastructure to perform complex encryption at line-rate speeds.
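To make "significantly larger" concrete, the sketch below compares the bytes on the wire for two widely used classical schemes against their post-quantum counterparts. The post-quantum sizes are the parameter-set sizes from FIPS 203 (ML-KEM-768) and FIPS 204 (ML-DSA-65); the choice of which schemes to compare, and the simplified notion of "wire bytes", are assumptions made for illustration.

```python
# All sizes in bytes.
KEY_EXCHANGE = {
    # X25519: each side sends a 32-byte public value.
    "X25519": {"public_key": 32, "response": 32},
    # ML-KEM-768: encapsulation key, then the ciphertext sent in response.
    "ML-KEM-768": {"public_key": 1184, "response": 1088},
}
SIGNATURES = {
    "Ed25519": {"public_key": 32, "signature": 64},
    "ML-DSA-65": {"public_key": 1952, "signature": 3309},
}


def wire_bytes(params):
    """Total bytes exchanged for one operation in this simplified model."""
    return sum(params.values())


kem_growth = wire_bytes(KEY_EXCHANGE["ML-KEM-768"]) / wire_bytes(KEY_EXCHANGE["X25519"])
sig_growth = wire_bytes(SIGNATURES["ML-DSA-65"]) / wire_bytes(SIGNATURES["Ed25519"])
print(f"key exchange grows {kem_growth:.1f}x, signatures grow {sig_growth:.1f}x")
```

A jump of this size per handshake is manageable for a laptop, but it adds up quickly on devices terminating millions of connections, which is why line-rate hardware support matters.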

Hardware Root of Trust mechanisms are becoming ubiquitous. Trusted Platform Modules verify that hardware, firmware, and boot sequences haven’t been compromised. Confidential Computing extends this into virtualized environments—encrypting data while it’s actively in use, isolating virtual machines from the underlying hypervisor and cloud provider administrators entirely.
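The mechanism behind TPM boot verification is a hash chain. Each boot stage is measured and folded into a Platform Configuration Register with an "extend" operation, so the final value depends on every component and on the order in which they loaded. The sketch below mimics a SHA-256 PCR extend in plain Python. The component names are hypothetical, and a real TPM does this in hardware on actual firmware images.

```python
import hashlib


def pcr_extend(pcr_value, component):
    """Extend a SHA-256 PCR: new = SHA256(old || SHA256(component))."""
    measurement = hashlib.sha256(component).digest()
    return hashlib.sha256(pcr_value + measurement).digest()


def measure_boot_chain(components):
    """Fold every boot component, in order, into a single PCR value."""
    pcr = bytes(32)  # boot-time PCRs typically start at all zeros after reset
    for component in components:
        pcr = pcr_extend(pcr, component)
    return pcr


# Hypothetical boot components.
good_chain = [b"firmware v1.2", b"bootloader v3", b"kernel 6.8"]
tampered_chain = [b"firmware v1.2", b"bootloader v3-evil", b"kernel 6.8"]

expected = measure_boot_chain(good_chain)
print("clean boot matches:   ", measure_boot_chain(good_chain) == expected)
print("tampered boot matches:", measure_boot_chain(tampered_chain) == expected)
```

Because a PCR can only be extended and never set directly, software that loads later in the boot process cannot rewrite the record of what ran before it.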

Sustainability Mandates Get Teeth

The era of voluntary environmental reporting is ending. Global regulatory bodies are enforcing strict, mandatory sustainability legislation with real consequences.

The EU’s recast Energy Efficiency Directive imposes rigorous reporting obligations on any data center exceeding 500 kW IT power demand. Operators must submit precise performance indicators—energy consumption, water footprint, waste heat reuse—into a centralized, publicly accessible European database.

The industry is moving beyond simple Power Usage Effectiveness (PUE) to a holistic sustainability triangle. Water Usage Effectiveness (WUE) quantifies liters of water consumed per kilowatt-hour of IT energy. Carbon Usage Effectiveness (CUE) tracks carbon emissions per kilowatt-hour of IT energy. German regulations now mandate that new data centers entering operation from July 2026 achieve a PUE of 1.2 or lower and an Energy Reuse Factor of at least 10%, legally requiring operators to divert waste heat toward district heating, hospitals, or schools.
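These metrics are all simple ratios, and it is worth seeing them together. The sketch below uses the commonly cited definitions, with IT energy as the denominator for PUE, WUE, and CUE and total facility energy as the denominator for the Energy Reuse Factor. The class, its field names, and the sample facility figures are hypothetical; the thresholds are the PUE and reuse figures mentioned above.

```python
from dataclasses import dataclass


@dataclass
class AnnualFacilityData:
    total_energy_kwh: float    # everything the site draws
    it_energy_kwh: float       # energy delivered to IT equipment
    water_liters: float
    carbon_kg_co2e: float
    reused_energy_kwh: float   # waste heat exported for reuse

    @property
    def pue(self):
        return self.total_energy_kwh / self.it_energy_kwh

    @property
    def wue(self):
        return self.water_liters / self.it_energy_kwh

    @property
    def cue(self):
        return self.carbon_kg_co2e / self.it_energy_kwh

    @property
    def erf(self):
        return self.reused_energy_kwh / self.total_energy_kwh

    def meets_thresholds(self, max_pue=1.2, min_erf=0.10):
        return self.pue <= max_pue and self.erf >= min_erf


# Hypothetical facility, one year of data.
site = AnnualFacilityData(
    total_energy_kwh=11_500_000,
    it_energy_kwh=10_000_000,
    water_liters=15_000_000,
    carbon_kg_co2e=3_000_000,
    reused_energy_kwh=1_500_000,
)
print(f"PUE {site.pue:.2f}, WUE {site.wue:.2f} L/kWh, "
      f"CUE {site.cue:.2f} kg/kWh, ERF {site.erf:.1%}")
print("meets thresholds:", site.meets_thresholds())
```

Tracking all of them at once matters because they trade off against each other: evaporative cooling, for example, can improve PUE while making WUE worse.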

Compliance without sacrificing performance is driving innovation: localized carbon capture, AI-driven cooling systems that eliminate overcooling, and strategic workload migration to regions with abundant zero-carbon power generation.

Geopatriation and the Rise of Sovereign Cloud

Perhaps the most underappreciated trend is the geopolitical fragmentation of cloud infrastructure. Rising global tensions, restrictive data privacy legislation, and fears of extraterritorial data access have catalyzed massive investment in sovereign cloud environments.

“Geopatriation”—the deliberate relocation of workloads from hyperscale public clouds to localized, sovereign environments—is accelerating dramatically. European spending on sovereign cloud IaaS is forecast to nearly double from $6.8 billion in 2025 to over $12.5 billion in 2026, an 83% year-over-year growth rate. Similar aggressive growth is occurring across the Middle East, Africa, and Asia-Pacific.

For enterprise architects, this introduces severe complexity. Global organizations can no longer rely on homogenous cloud architectures. They must design fragmented, hybrid architectures that blend multi-tenant public cloud services for benign applications with tightly controlled on-premises micro data centers and regional sovereign clouds for critical intellectual property and personally identifiable information.
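In practice, this kind of fragmentation usually ends up expressed as a placement policy. The sketch below is a deliberately minimal, hypothetical example: the sensitivity levels, the target names, and the rules themselves are assumptions, and a real policy would be driven by legal review rather than a four-line function.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    PII = 3
    CRITICAL_IP = 4


def placement(sensitivity, residency_region=None):
    """Map a workload to a deployment target using a simple, hypothetical policy."""
    if sensitivity in (Sensitivity.PII, Sensitivity.CRITICAL_IP):
        if residency_region:
            # Regulated data with a residency requirement stays in-region.
            return f"sovereign-cloud:{residency_region}"
        return "on-prem-micro-dc"
    # Benign workloads can use multi-tenant public cloud.
    return "public-cloud"


print(placement(Sensitivity.PUBLIC))
print(placement(Sensitivity.PII, residency_region="de"))
print(placement(Sensitivity.CRITICAL_IP))
```

Writing the policy down as code, even in a form this crude, forces the conversation about which data classes exist and which jurisdictions actually apply to them.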

What This Means for Strategic Leaders

The infrastructure transformation happening beneath the AI hype is profound and demands attention. Here’s what strategic technology leaders should be doing now:

Build disaggregation competency. Start piloting CXL 2.0/3.1 capable platforms today to understand memory pooling use cases before multi-rack CXL 4.0 fabrics mature in 2027-2028.

Plan for liquid cooling. If you’re designing new facilities or refreshing compute infrastructure, assume liquid cooling requirements. The density demands are only accelerating.

Evaluate your edge strategy. Determine which workloads genuinely need low latency versus cloud flexibility. Micro data centers can bypass traditional construction delays and cloud egress costs.

Embrace open standards. The Ultra Ethernet vs. InfiniBand battle is a referendum on vendor lock-in. Position your organization to benefit from open ecosystem interoperability.

Start the post-quantum transition. The “harvest now, decrypt later” threat is real. Hardware crypto-agility and PQC-ready infrastructure should be procurement requirements today.

Map your sovereignty exposure. Understand where your data lives, which jurisdictions have legal authority over it, and build the hybrid architectures necessary to comply with diverging regulatory frameworks.

The organizations that recognize these infrastructure shifts—rather than dismissing them as plumbing while chasing AI headlines—will build the resilient, scalable, compliant foundations that actually enable competitive advantage in the years ahead.

The future isn’t just about what we compute. It’s about how and where we compute it.