If you’ve ever deployed a large language model in production, you know the pain: massive memory footprints, expensive GPU time, and the constant tension between model quality and inference cost. Google Research just dropped something that might fundamentally change this calculus: a compression algorithm called TurboQuant that gets provably close to the theoretical optimum with no measurable accuracy loss on the reported benchmarks.

This isn’t incremental improvement. This is 6x memory reduction while maintaining perfect performance on long-context retrieval tasks. Let me break down what makes this significant and why it matters for anyone building AI systems.

The Core Problem: Vectors Are Expensive

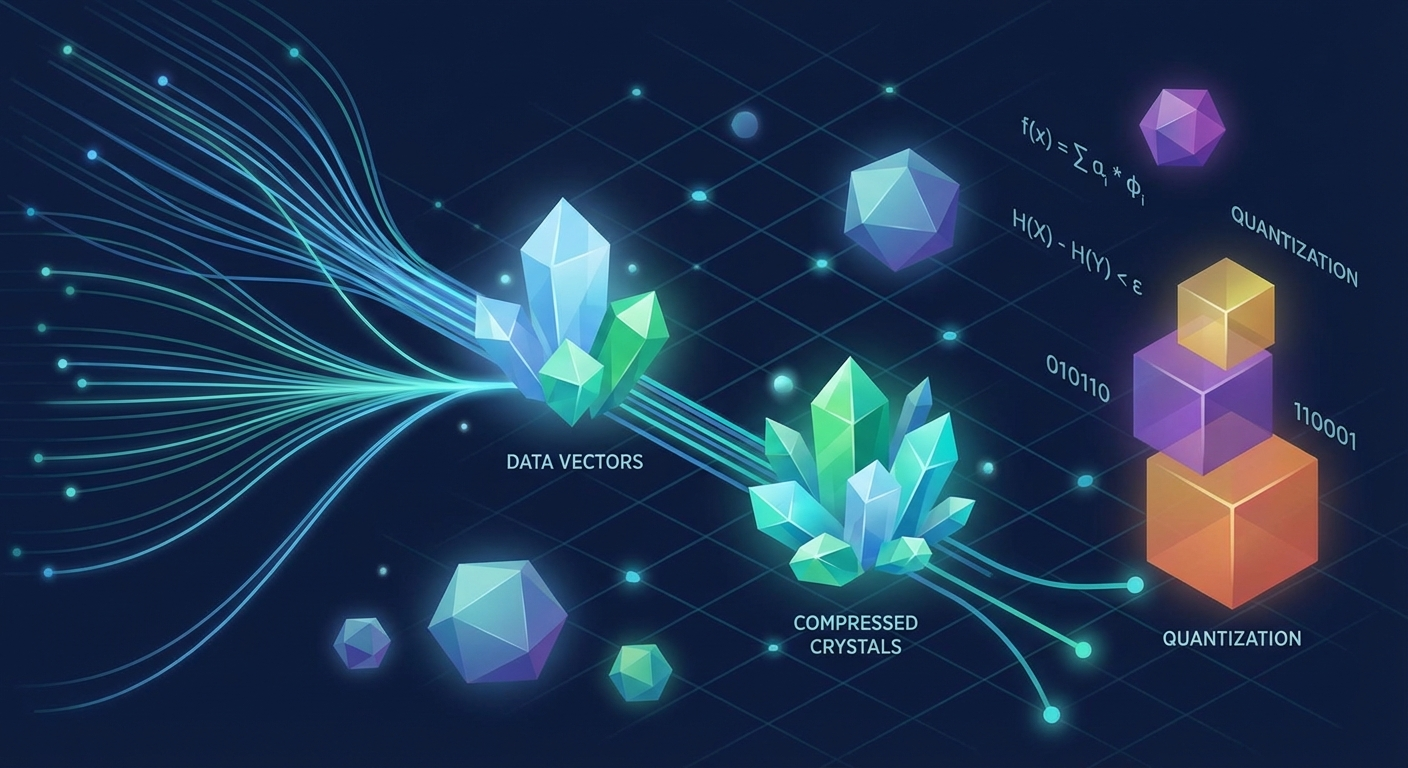

Everything in modern AI reduces to vectors. When you ask an LLM a question, your query becomes a high-dimensional vector. The model’s memory of previous tokens? Also vectors, stored in what’s called the key-value (KV) cache. Vector databases powering semantic search? Billions of vectors.

The problem is that high-dimensional vectors consume enormous amounts of memory. For LLMs, the KV cache grows linearly with context length—every new token requires storing more vectors. This creates a fundamental bottleneck: longer contexts mean more memory, more memory means slower inference, and slower inference means higher costs.
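To make the linear growth concrete, here is a back-of-the-envelope sketch. The model shape (32 layers, 8 KV heads, head dimension 128) is a hypothetical configuration picked only for illustration, not a figure from the paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Bytes needed to store keys and values for every layer and token."""
    # Factor of 2: one key vector and one value vector per head per token.
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    return batch_size * seq_len * values_per_token * bits_per_value / 8


# Hypothetical model shape, chosen only for illustration.
cfg = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (8_192, 32_768, 131_072):
    size = kv_cache_bytes(seq_len=seq_len, bits_per_value=16, **cfg)
    print(f"{seq_len:>7} tokens: {size / 2**30:.2f} GiB at 16 bits per value")
```

Quadrupling the context quadruples the cache, and that is per request, before counting the model weights.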

Vector quantization—compressing these vectors by reducing their precision—is the obvious solution. But traditional approaches hit a wall. Most quantization methods require storing additional “quantization constants” for every block of data, which adds 1-2 extra bits per number. When you’re trying to compress to 3-4 bits total, that overhead is devastating.
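A quick sketch of where that overhead comes from, assuming a common block-wise scheme that stores a 16-bit scale and a 16-bit zero point per block. The block sizes and constant widths are illustrative assumptions, not numbers from the paper.

```python
def effective_bits(payload_bits, block_size, scale_bits=16, zero_point_bits=16):
    """Average bits per number once per-block constants are amortized."""
    overhead = (scale_bits + zero_point_bits) / block_size
    return payload_bits + overhead


for block_size in (16, 32):
    total = effective_bits(payload_bits=3, block_size=block_size)
    print(f"block of {block_size}: 3 payload bits cost {total:.1f} bits per number")
```

With blocks of 32 the constants add 1 bit per number, and with blocks of 16 they add 2 bits, so a nominal 3-bit format ends up costing 4 to 5 bits.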

How TurboQuant Works

The TurboQuant paper (to be presented at ICLR 2026) introduces a two-stage approach that eliminates this overhead entirely.

Stage 1: PolarQuant for High-Quality Compression

The first stage uses a technique called PolarQuant that randomly rotates input vectors before quantization. This rotation is the key insight—it transforms any arbitrary input into vectors where each coordinate follows a predictable Beta distribution.

Why does this matter? Because when you know the distribution of your data, you can design optimal quantizers for it. TurboQuant precomputes optimal Lloyd-Max quantizers for Beta-distributed coordinates, which means each coordinate can be quantized independently without coordination overhead.
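The rotate-then-quantize idea can be sketched in a few lines of standard-library Python. This is a toy illustration under stated assumptions, not the paper's implementation: the codebook is fitted with Lloyd-Max iterations on synthetic coordinates of random unit vectors (a stand-in for precomputing it from the analytic distribution), the rotation is a dense matrix built with Gram-Schmidt, and all function names are hypothetical.

```python
import math
import random


def random_rotation(d, rng):
    """Random orthogonal matrix via Gram-Schmidt on Gaussian rows."""
    rows = []
    while len(rows) < d:
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for r in rows:  # strip the component along each earlier row
            proj = sum(a * b for a, b in zip(v, r))
            v = [a - proj * b for a, b in zip(v, r)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:
            rows.append([a / norm for a in v])
    return rows


def matvec(m, x):
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def unit_vector(d, rng):
    v = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def lloyd_max(samples, levels, iters=30):
    """1-D Lloyd-Max: alternate nearest-centroid assignment and centroid update."""
    samples = sorted(samples)
    n = len(samples)
    # Start from evenly spaced quantiles of the sample.
    centroids = [samples[(2 * i + 1) * n // (2 * levels)] for i in range(levels)]
    for _ in range(iters):
        sums, counts = [0.0] * levels, [0] * levels
        for s in samples:
            k = min(range(levels), key=lambda j: abs(s - centroids[j]))
            sums[k] += s
            counts[k] += 1
        centroids = [sums[k] / counts[k] if counts[k] else centroids[k]
                     for k in range(levels)]
    return centroids


rng = random.Random(0)
d, bits = 32, 3

# The codebook depends only on the dimension, never on the data being compressed.
train = [c for _ in range(200) for c in unit_vector(d, rng)]
codebook = lloyd_max(train, 2 ** bits)
rotation = random_rotation(d, rng)


def quantize(x):
    """Rotate, then snap each coordinate independently to its nearest centroid."""
    y = matvec(rotation, x)
    return [min(range(len(codebook)), key=lambda j: abs(c - codebook[j])) for c in y]


def dequantize(codes):
    """Look up centroids, then undo the rotation with its transpose."""
    y_hat = [codebook[k] for k in codes]
    return matvec([list(col) for col in zip(*rotation)], y_hat)


# A deliberately "spiky" unit vector: all of its mass sits on one coordinate.
x = [1.0] + [0.0] * (d - 1)
x_hat = dequantize(quantize(x))
mse = sum((a - b) ** 2 for a, b in zip(x, x_hat))
print(f"squared error at {bits} bits per coordinate: {mse:.4f}")
```

The spiky input is the worst case for a naive per-coordinate quantizer, but after rotation its coordinates look like any other random unit vector's, so the same fixed codebook works without per-block scales.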

The geometric intuition is elegant: instead of describing a vector as “go 3 units on X, 4 units on Y,” PolarQuant converts it to “go 5 units at a 53-degree angle from the X axis” (equivalently, 37 degrees from the Y axis). This polar representation maps naturally onto a fixed, predictable grid, eliminating the need for expensive data normalization.
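The arithmetic behind that example is easy to check. This two-dimensional conversion only illustrates the change of coordinates, not PolarQuant itself. Note that the angle depends on the reference axis: about 53 degrees measured from the X axis, which is the same direction as about 37 degrees measured from the Y axis.

```python
import math

x, y = 3.0, 4.0
radius = math.hypot(x, y)                   # length of the vector
angle_deg = math.degrees(math.atan2(y, x))  # angle measured from the X axis

print(radius, round(angle_deg, 2))          # 5.0 53.13

# Converting back recovers the Cartesian coordinates (up to rounding).
x_back = radius * math.cos(math.radians(angle_deg))
y_back = radius * math.sin(math.radians(angle_deg))
```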

Stage 2: QJL for Error Correction

Here’s the subtle problem with MSE-optimal quantizers: they introduce bias in inner product estimation. If you’re using compressed vectors for attention scores or similarity search, this bias accumulates and degrades results.

TurboQuant’s solution is a second stage using Quantized Johnson-Lindenstrauss (QJL)—a mathematical technique that takes the tiny residual error from stage one and encodes it in just 1 additional bit per coordinate. The result is an unbiased inner product estimator with negligible computational overhead.
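Here is a minimal sketch of a sign-bit Johnson-Lindenstrauss estimator, to show why one bit per projection can still give an unbiased inner product. It relies on a standard identity for Gaussian projections: for g drawn from N(0, I), E[sign(g·x)(g·y)] = sqrt(2/π)·⟨x, y⟩/‖x‖. The function names are hypothetical, and the large number of projections is used only to make the unbiasedness visible. In TurboQuant the sketch is described as being applied to the stage-one residual with one bit per coordinate.

```python
import math
import random


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def qjl_encode(x, proj):
    """Keep one sign bit per random projection, plus the norm of x."""
    signs = [1 if dot(row, x) >= 0 else -1 for row in proj]
    return signs, math.sqrt(dot(x, x))


def qjl_estimate(signs, norm, y, proj):
    """Unbiased estimate of <x, y> from the sign bits of x and an unquantized y."""
    acc = sum(s * dot(row, y) for s, row in zip(signs, proj))
    return math.sqrt(math.pi / 2) * norm * acc / len(proj)


def unit(v):
    n = math.sqrt(dot(v, v))
    return [a / n for a in v]


rng = random.Random(0)
d, m = 16, 4000  # m is large here only to show the estimate converging
proj = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]
x = unit([rng.gauss(0.0, 1.0) for _ in range(d)])
y = unit([rng.gauss(0.0, 1.0) for _ in range(d)])

signs, norm = qjl_encode(x, proj)
estimate = qjl_estimate(signs, norm, y, proj)
print(f"true inner product {dot(x, y):+.3f}, sign-bit estimate {estimate:+.3f}")
```

Only x is reduced to sign bits. The query side y stays in full precision, which matches the attention setting where cached keys are compressed and the current query is not.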

This two-stage architecture is what lets TurboQuant get close to the theoretical floor. The paper proves that TurboQuant’s distortion stays within a constant factor of about 2.7 of the information-theoretic lower bound, the smallest distortion any quantizer can achieve at a given bit-width. At the lowest bit-width (1 bit), the gap narrows to about 1.45.

The Numbers That Matter

The experimental results are striking:

KV Cache Compression:

- 3.5 bits per channel: Absolute quality neutrality (zero accuracy loss)

- 2.5 bits per channel: Marginal quality degradation

- Perfect needle-in-a-haystack retrieval at 6x+ memory reduction

- Up to 8x performance increase on attention logit computation (tested on H100 GPUs)

Vector Search:

- Superior recall ratios compared to Product Quantization (PQ) and RaBitQ baselines

- Near-zero indexing time (data-oblivious, no preprocessing required)

- Works across all tested high-dimensional datasets without tuning

Long-Context Benchmarks:

- Perfect scores on needle-in-haystack tasks (finding specific information in massive context)

- High performance maintained on LongBench, ZeroSCROLLS, RULER, and L-Eval

- Tested on Gemma and Mistral models

The “data-oblivious” property deserves emphasis. Unlike traditional product quantization that requires expensive k-means clustering during indexing, TurboQuant applies instantly to any vector. This makes it suitable for online applications where vectors arrive in real-time—exactly the scenario you face with KV cache compression during inference.

Why This Matters for Production AI

Three implications stand out:

1. Longer Context Windows Become Practical

The KV cache is the primary memory constraint for long-context inference. If you can compress it by 6x without accuracy loss, the same KV-cache memory budget holds roughly 6x more tokens, so you can serve much longer contexts on the same hardware or serve the same contexts with far less memory per request. For applications like document analysis, code completion, or multi-turn conversations, this is transformative.

2. Vector Search Gets Cheaper and Faster

Vector databases are expensive because they store billions of high-precision vectors. TurboQuant’s ability to maintain recall while compressing to 3-4 bits means you can store roughly 8-10x more vectors in the same memory footprint relative to 32-bit floats (about 4-5x relative to 16-bit storage). Combined with the near-zero indexing time, this could significantly reduce the cost of RAG (retrieval-augmented generation) systems.
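The storage gain depends on the precision you start from, which a small calculation makes explicit. The 768-dimensional embedding width is a hypothetical example, and the figures ignore index structures and any per-vector metadata.

```python
def vectors_per_gib(dim, bits_per_value):
    """How many dim-dimensional vectors fit in one GiB at a given precision."""
    bytes_per_vector = dim * bits_per_value / 8
    return int(2**30 // bytes_per_vector)


dim = 768  # hypothetical embedding width
baseline = vectors_per_gib(dim, 32)

for bits in (32, 16, 4, 3):
    n = vectors_per_gib(dim, bits)
    print(f"{bits:>2} bits: {n:>9,} vectors per GiB ({n / baseline:.1f}x vs 32-bit)")
```

Against a 32-bit baseline, 4 bits gives 8x and 3 bits gives about 10.7x. Against a 16-bit baseline the same bit-widths give about 4x and 5.3x.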

3. Edge Deployment Opens Up

Memory constraints are even more severe on edge devices. A compression technique that requires no preprocessing and maintains accuracy at 3 bits per channel makes it feasible to run larger models on smaller hardware—phones, embedded systems, and IoT devices that were previously out of reach.

The Theoretical Foundation

What separates TurboQuant from engineering heuristics is its theoretical grounding. The paper provides formal proofs of information-theoretic lower bounds on achievable distortion, then demonstrates that TurboQuant approaches these bounds.

The key theoretical results:

- MSE Distortion Lower Bound: Any quantizer must have distortion ≥ 1/4^b for bit-width b

- TurboQuant MSE Distortion: ≤ (√3 · π / 2) × 1/4^b ≈ 2.72 × the lower bound

- Inner Product Distortion: Similar near-optimal guarantees for attention-style operations
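The two MSE expressions above can be tabulated directly. This sketch only evaluates the stated formulas for a few bit-widths. It does not measure anything, and the function name is hypothetical.

```python
import math

# Constant in the upper bound: sqrt(3) * pi / 2, roughly 2.72.
GAP = math.sqrt(3) * math.pi / 2


def mse_bounds(bits):
    """Return (lower bound for any quantizer, TurboQuant's guaranteed upper bound)."""
    lower = 4.0 ** -bits
    return lower, GAP * lower


for b in (1, 2, 3, 4):
    lower, upper = mse_bounds(b)
    print(f"b={b}: lower bound {lower:.4f}, TurboQuant guarantee <= {upper:.4f}")
```

Each extra bit divides both bounds by 4, so the ratio between them stays fixed at about 2.72 regardless of bit-width.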

This isn’t just impressive compression. It is provably near-optimal compression: no quantizer at the same bit-width can reduce distortion by more than that constant factor.

Integration with Google’s AI Stack

TurboQuant doesn’t exist in isolation. Google explicitly mentions applying these techniques to Gemini’s KV cache bottlenecks, and the work connects to their broader vector search infrastructure powering semantic search at scale.

The work also builds on two supporting techniques, each published separately, that may be useful independently:

- QJL (Quantized Johnson-Lindenstrauss): A standalone 1-bit quantization method for inner products

- PolarQuant: The rotation-based compression that simplifies downstream quantization

Both are being presented at major conferences (QJL at AAAI 2025, PolarQuant at AISTATS 2026), suggesting Google sees these as foundational contributions beyond the specific TurboQuant application.

What’s Next

The ICLR 2026 presentation should bring more implementation details and potentially open-source code. For now, the implications are clear: if you’re working on LLM inference optimization, vector databases, or edge AI deployment, TurboQuant’s techniques are worth understanding.

The core insight—that random rotation induces predictable distributions amenable to optimal scalar quantization—is simple enough to implement experimentally. The QJL residual correction adds complexity but addresses a real limitation of naive quantization approaches.

Whether this specific algorithm becomes industry standard or gets absorbed into vendor implementations, the broader message is that we’re approaching theoretical limits on vector compression. The overhead that seemed inevitable a few years ago can, in fact, be eliminated. That’s the kind of fundamental progress that reshapes what’s possible.

For the full technical details, see the TurboQuant paper on arXiv and Google Research’s announcement.